OUTSCHOOL

The Mix-Up Machine: Helping Kids Discover What They Love to Learn

TL;DR

Outschool's model depends on intrinsic motivation, but most kids hit the blank-page problem when asked what they want to learn, which limits both engagement and discovery.

Through rapid prototyping with ChatGPT and Lovable, I learned three things: kids get excited about finding new interests by combining ones they already have, they light up when quizzes are exploratory rather than evaluative, and they eagerly favorite classes connected to subjects they discover themselves.

I chose a layered validation approach over a native build. This got us early signal within two days and let us increase fidelity step by step, de-risking our key assumptions before committing engineering time.

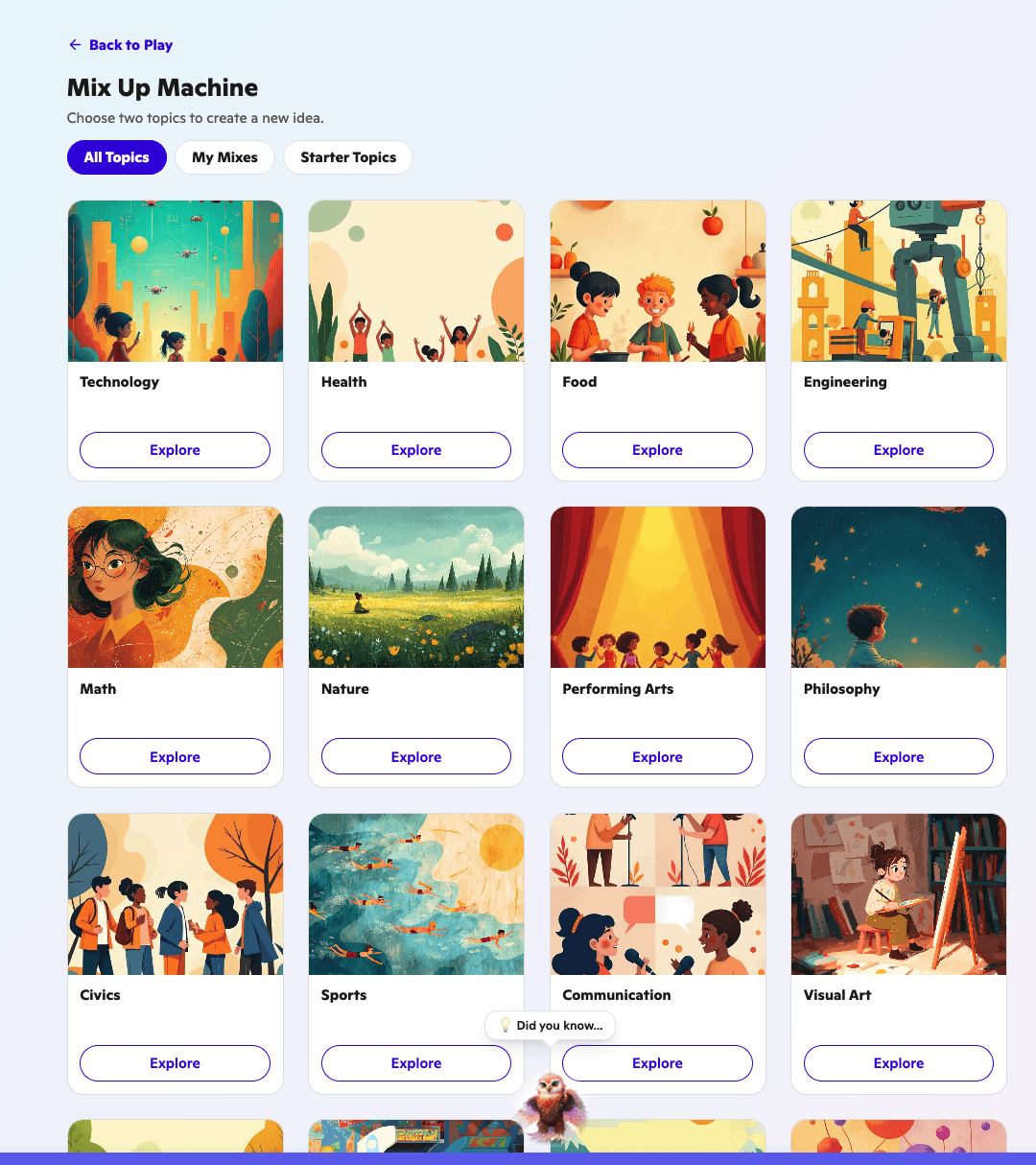

We shipped the Mix-Up Machine, a feature where learners combine two subjects to discover new topics, take exploratory quizzes, and save classes to their favorites.

Learners averaged 3 quizzes per session with an 80% quiz completion rate, making it the most engaging feature in the learner experience outside of enrolled classes.

The Problem

Kids don't know what they're interested in, and that breaks everything

Every edtech company is trying to solve motivation. Most reach for extrinsic levers like grades, badges, or streaks. Outschool's bet is different: use live teachers and a learner's natural curiosity to drive engagement.

But there is a fundamental flaw in that model. When you ask a kid "what do you want to learn?" most of them shrug and say "I don't know," or they default to what adults have told them to be interested in. This is the blank-page problem, and it was quietly undermining Outschool's core value proposition.

For the platform to succeed, learners needed to figure out what actually made them light up, but there was no mechanism to help them do that. This project was Outschool's first attempt in several years to create an engaging experience for learners outside of the classroom.

The Context

A small team, a greenfield problem, and no time to waste

Outschool is an online learning marketplace connecting kids with live teachers across thousands of subjects, serving around 24,000 monthly active learners at the time. I was a PM on the team responsible for the learner experience, and my first contribution was identifying the blank-page problem as the core product challenge to solve. Our team was small: myself, one designer, and three engineers.

We had a couple of months to deliver something meaningful. Because this was a greenfield exploration, we could not spend weeks on research before showing results. We needed to validate as we went.

One rule we set early: never assume that something engaging for adults will be engaging for kids, or vice versa. Every idea had to be tested with actual learners as fast as possible.

Decision

Speed to signal over polished builds

With the core problem identified, I faced a key choice: build a native flow inside the learner app, or find the fastest possible way to test whether the concept was even interesting to kids. Building natively would have taken weeks before we had any signal, and we had no evidence the idea would resonate. So I chose a layered prototyping approach, starting with the cheapest possible test and increasing fidelity only as confidence grew.

Option A: Build a native feature from scratch:

This would produce a polished experience but would consume weeks of engineering time before we learned whether the core loop was engaging for kids at all.

Option B: Layer prototypes from low to high fidelity:

Start with a ChatGPT-based prototype to test the core mechanic internally, progress to a Lovable UI prototype for unmoderated user testing, then instrument the Lovable prototype in the live app before committing to a full build.

What I chose and why:

I chose Option B. In two days I built a custom GPT with basic heuristics: give it two subjects and it generates a combined topic. I shared it with employees to try with their kids and got early signal that the mixing mechanic was genuinely fun. This gave us confidence to invest in the next layer without burning engineering capacity. We gave up polish and consistency with the design system in the early stages, but we gained weeks of learning.

Insight

Kids love quizzes when quizzes stop being tests

Because of the layered approach, each round of testing surfaced something new. When we brought the Lovable prototype to usertesting.com for three days of unmoderated sessions with parents and learners aged 10 to 14, we confirmed that the central mixing loop was engaging and that parents saw value in the concept. But the real turning point came after we instrumented the prototype and launched it to actual learners inside the app.

We discovered that learners didn't just complete the exploratory quizzes; they loved them and wanted to take them over and over again. This was a surprise. Quizzes in education are almost universally evaluative, which tends to create anxiety rather than curiosity.

But when a quiz is framed as exploration rather than assessment, the dynamic completely flips. We also found that learners readily favorited classes connected to their discovered subjects even when class names were minimal (just the subject name plus "intensive" or "workshop"). The subject match alone was enough to drive intent.

The Solution

The Mix-Up Machine: discovery through play

Building on these insights, we designed and shipped the Mix-Up Machine. The experience lets learners pick two subjects they are curious about and generates a blended topic at the intersection. From there, they can explore the new subject, take exploratory quizzes to dig deeper, and favorite related classes.

The whole flow is built around play rather than instruction. There is no right answer, no evaluation, just a prompt to be curious and see what happens when two ideas collide. Our designer refined the Lovable prototype to align with Outschool's design system and component library, and engineering rebuilt the feature properly in about three weeks.

We launched in time for the January semester. The Mix-Up Machine gave Outschool something it had not had in years: a reason for learners to come back to the app between classes, and a mechanism that helps them articulate interests they did not know they had.

Here's what I owned

As the PM on this team, I identified the blank-page problem as the core challenge, framed it for the team, and led the strategy for how we would solve and validate it. I built the initial ChatGPT prototype myself in two days, including the heuristics for subject mixing, and ran the first informal tests with employees and their kids. I developed the rules and principles for the experience that guided subsequent iterations.

I also co-designed the instrumentation strategy and collaborated with engineers to build the analytics dashboard in Metabase. Throughout the project I drove the validation cadence, deciding when we had enough signal to progress from one fidelity layer to the next, and when to commit to the full build.

Impact

Increased Learner Engagement

After the launch, we saw a steady climb in learner engagement through the January semester. Among the roughly 1,000 learners (out of 24,000 monthly active users at the time) who engaged with the feature, the numbers were striking.

Learner engagement: Learners averaged 3 quizzes per session with an 80% quiz completion rate. The Mix-Up Machine became the most engaging feature in the learner experience outside of enrolled classes.

Adoption and next steps: About 5% of all learners in the app engaged with the feature at launch. With engagement validated as strong, the team's next priority shifted to driving more learners to the experience.

Strategic impact: This was Outschool's first successful attempt in several years to create a compelling learner experience outside the classroom. We did not have enough time to establish a direct connection between this engagement and retention, but an established relationship between in-classroom engagement and retention gave us confidence that a similar connection would hold.

Collaboration

Built with a small team that punched way above its weight

This project worked because five people trusted each other to move fast without cutting corners on quality and rigor. Our designer built the research plan, ran the unmoderated testing sessions, evaluated the results, wrote up findings, and kept iterating on the Lovable prototype based on what we learned. She also brought the final design into alignment with Outschool's design system.

Our engineers devised an inventive process to copy the Lovable prototype directly into production code and instrument it with analytics, giving us real learner data without waiting for a full rebuild. Together we built the instrumentation dashboard in Metabase so the whole team could watch the data come in. I am genuinely grateful for this team.

The designer's instinct for turning research into clear design direction kept us honest, and the engineers' willingness to find creative shortcuts meant we never had to choose between speed and real data. Shipping this in under three months with three engineers, one designer, and one PM is something I remain extremely proud of.